GenAI Audit Framework

The 9Senses GenAI Audit Framework is a structured methodology to assess how well a generative AI system performs against real business use cases — and how safely, responsibly, and reliably it behaves from a user, governance, and risk perspective. The framework combines use-case adherence, business outcomes, and standardized quality dimensions to produce an actionable improvement roadmap.

The framework supports two audit levels: Level 1 (behavioral / black-box evaluation based on observable behavior) and Level 2 (open-book diagnosis covering architecture, retrieval/RAG pipelines, agentic orchestration, governance, compliance, ethics, and value modeling). It applies across the full spectrum of GenAI deployments — customer-facing copilots, internal assistants, document processing systems, and multi-step autonomous agents.

Level 1 Audit

An independent, external review of your chatbot's observable performance (black-box). No internal access to your technical environment required. The Level 1 audit is delivered within 5 business days.

Level 2 Audit

A tailored deep-dive analysis, e.g., reviewing technical architecture, retrieval systems, governance, compliance, and business value. It is the recommended next step if Level 1 identifies critical issues.

Why a GenAI Audit?

Generative AI systems are increasingly embedded in high-stakes workflows — customer service, sales, legal, HR, and operations — where failures translate directly into reputational, regulatory, or financial risk. A structured GenAI audit surfaces weaknesses before they cause harm, and validates that the system actually delivers its intended use cases and measurable business value.

- Use-case adherence: Does the system reliably complete the tasks it was built for?

- Business outcomes: Does it improve containment, conversion, resolution time, cost efficiency, or satisfaction?

- Risk exposure: Does it hallucinate, leak sensitive data, or fail under adversarial prompts?

- Governance readiness: Are transparency signals, human-in-the-loop controls, escalation paths, and monitoring adequate?

- Regulatory compliance: Does it meet applicable obligations (EU AI Act, GDPR, sector-specific rules)?

Level 1: Behavioral (black-box) Audit

Level 1 is a standardized external evaluation based on publicly observable behavior at the time of testing. It benchmarks performance, identifies user-facing weaknesses, and delivers a prioritized set of recommendations — without requiring access to the underlying system.

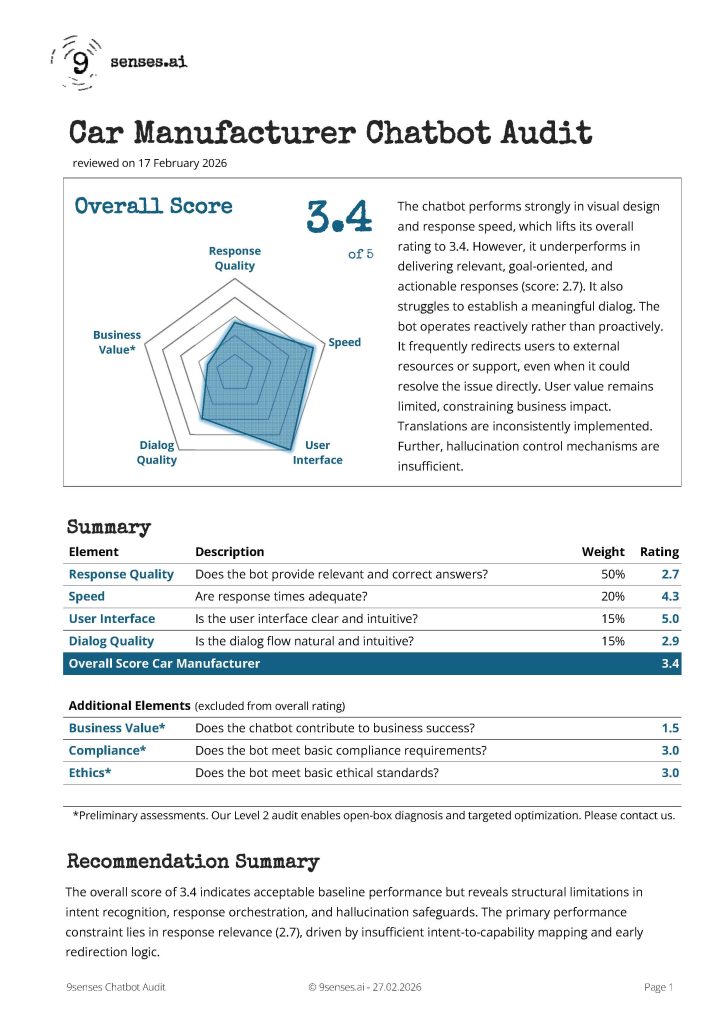

Core Dimensions & Weights

| Dimension | What is evaluated | Weight |
|---|---|---|
| Output Quality | Relevance to the user's goal, factual correctness, completeness, formatting, hallucination robustness, citation behavior | 50% |
| Responsiveness | Latency for simple and complex requests, streaming behavior, perceived speed under load | 20% |
| User Interface & Experience | Clarity, usability, layout, readability, affordance design, accessibility signals | 15% |
| Interaction & Dialog Quality | Conversation flow, expectation management, tone calibration, multi-turn coherence, escalation behavior | 15% |

Additional dimensions are reviewed as outside-in indicators (not part of the Level 1 composite score): Business Value, Compliance, and Ethics. These inform the qualitative narrative and flag areas requiring deeper Level 2 investigation.
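The Responsiveness dimension distinguishes the time until the first visible output from the total response time, which matters for streaming interfaces. A minimal Python sketch of how both could be measured for a single prompt follows. `measure_latency` and `fake_stream` are hypothetical names, and the stub stands in for a real chatbot client, which this page does not specify.

```python
import time
from typing import Callable, Iterable, Iterator


def measure_latency(stream: Callable[[str], Iterable[str]], prompt: str) -> dict:
    """Time one streamed response: time to first chunk and total duration.

    `stream` is a hypothetical client function that yields response chunks
    as they arrive; adapt it to whatever interface the chatbot exposes.
    """
    start = time.perf_counter()
    first_chunk_s = None
    chunks = []
    for chunk in stream(prompt):
        if first_chunk_s is None:
            # First visible output: a proxy for perceived speed.
            first_chunk_s = time.perf_counter() - start
        chunks.append(chunk)
    total_s = time.perf_counter() - start
    return {
        # With no chunks at all, fall back to the total duration.
        "time_to_first_chunk_s": first_chunk_s if first_chunk_s is not None else total_s,
        "total_time_s": total_s,
        "response": "".join(chunks),
    }


def fake_stream(prompt: str) -> Iterator[str]:
    """Stand-in chatbot: yields three chunks, pausing 10 ms before each."""
    for chunk in ("Hello", ", ", "world"):
        time.sleep(0.01)
        yield chunk


if __name__ == "__main__":
    print(measure_latency(fake_stream, "What are your opening hours?"))
```

Running the same measurement over a set of simple and complex prompts, and repeating it at different times of day, would give the per-request-type view the dimension describes.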

Scoring Methodology

Each dimension is scored on a standardized 1–5 scale and aggregated using the weights above into an overall performance score.

| Score | Meaning |
|---|---|
| 1 | Critical deficiency with high user impact or material risk |
| 2 | Significant weaknesses requiring remediation |
| 3 | Acceptable performance with identifiable limitations |
| 4 | Strong performance with minor gaps |
| 5 | Best-practice level: benchmark-worthy performance |
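The weighted aggregation can be illustrated with a short Python sketch. The weights come from the Core Dimensions table; the dictionary keys, the input validation, and rounding to two decimals are assumptions for illustration, not the documented 9Senses implementation.

```python
# Level 1 composite weights, taken from the Core Dimensions table.
WEIGHTS = {
    "output_quality": 0.50,
    "responsiveness": 0.20,
    "ui_ux": 0.15,
    "dialog_quality": 0.15,
}
assert abs(sum(WEIGHTS.values()) - 1.0) < 1e-9  # weights must sum to 100%


def composite_score(scores: dict) -> float:
    """Weighted average of per-dimension scores on the 1-5 scale."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing dimension scores: {sorted(missing)}")
    for name in WEIGHTS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} score {scores[name]} is outside the 1-5 scale")
    # Rounding to two decimals is an assumption for reporting purposes.
    return round(sum(WEIGHTS[name] * scores[name] for name in WEIGHTS), 2)


if __name__ == "__main__":
    example = {"output_quality": 4, "responsiveness": 3, "ui_ux": 5, "dialog_quality": 3}
    print(composite_score(example))  # 3.8
```

Because Output Quality carries half of the weight, a one-point change there moves the composite as much as a one-point change in all three other dimensions combined.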

Level 2: Open-Book Audit (Root-Cause Diagnosis)

Level 2 is an open-book audit that explains why the GenAI system behaves as it does. It reviews the technical and organizational system behind the deployment and produces a targeted optimization plan grounded in root causes — not just symptoms.

Open-Book Scope & Outcomes

| Area | What we review (examples) | Typical outcomes |
|---|---|---|
| Architecture & Orchestration | System boundaries, tool/API usage, routing logic, fallback strategy, agentic loops, trust zones, dependency risks | Architecture risk map, refactoring recommendations, safer routing & fallback design |
| Retrieval / RAG Quality | Chunking strategy, retrieval relevance, grounding behavior, citation logic, context window management, stale content risk | Retrieval tuning plan, grounding improvements, measurable answer-quality lift |
| Prompting & Guardrails | System prompts, policy hierarchy, refusal strategy, tool-use permissions, instruction injection robustness, output filters | Hardened prompts, safer policies, reduced jailbreak & prompt injection risk |
| Security & Data Protection | Access controls, secrets handling, data minimization, leakage scenarios, PII exposure, logging sensitivity | Risk remediation plan, control improvements, safer data flows |
| Governance & Operations | Ownership model, human-in-the-loop design, escalation paths, monitoring, incident response, evaluation cadence, change management | Operating model, monitoring design, continuous improvement loop |
| Compliance & Ethics | Transparency & disclosure obligations, data processing mapping, fairness considerations, EU AI Act risk classification, regulated use cases | Compliance readiness checklist, documentation & policy improvements |
| Business Value Modeling | KPI definitions, baseline vs. target, measurement instrumentation, token/run-cost controls, ROI sensitivity analysis | Value case, KPI dashboard spec, cost controls, prioritized roadmap |
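For the Retrieval / RAG Quality area, one common way to quantify retrieval relevance is recall@k: for each test query with known relevant chunks, the share of those chunks that appear in the top k retrieved results. The Python sketch below assumes such a labeled evaluation set exists. Recall@k is a standard information-retrieval metric chosen here for illustration, not necessarily the metric used in a 9Senses audit, and the chunk IDs are hypothetical.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Share of the relevant chunk IDs that appear among the top-k retrieved IDs."""
    if not relevant:
        raise ValueError("need at least one relevant chunk per query")
    # Use a set so duplicate retrievals are not double-counted.
    hits = set(retrieved[:k]) & relevant
    return len(hits) / len(relevant)


def mean_recall_at_k(eval_set: list[tuple[list[str], set[str]]], k: int) -> float:
    """Average recall@k over (retrieved_ids, relevant_ids) pairs, one per test query."""
    if not eval_set:
        raise ValueError("evaluation set is empty")
    return sum(recall_at_k(ret, rel, k) for ret, rel in eval_set) / len(eval_set)


if __name__ == "__main__":
    # Hypothetical chunk IDs for two labeled test queries.
    eval_set = [
        (["c7", "c2", "c9"], {"c2", "c4"}),  # one of two relevant chunks found
        (["c4", "c1"], {"c4"}),              # the only relevant chunk found
    ]
    print(mean_recall_at_k(eval_set, k=3))  # 0.75
```

Measuring the same evaluation set before and after a change to chunking or retrieval settings is one way to make an "answer-quality lift" traceable to the retrieval layer.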

Methodology Summary

- Level 1: structured behavioral testing (black-box), reproducible baseline scoring, prioritized recommendations — no system access required

- Level 2: open-book root-cause diagnosis covering architecture, retrieval/RAG, agentic orchestration, governance, compliance, and value modeling — produces a targeted optimization roadmap

- Always: use-case adherence and business outcomes serve as the primary anchor for defining what "good" means in each context

- Applies to: customer-facing AI assistants, internal copilots, document intelligence systems, multi-agent pipelines, and hybrid human+AI workflows

FAQs - Frequently Asked Questions

What is a Chatbot Audit?

A chatbot audit is a structured evaluation of a chatbot's behavior. It can be performed as a black-box audit (Level 1) that evaluates only observable behavior, or as an open-book audit (Level 2) that also evaluates business value, internal structures, and the causes of observed behavior based on detailed insight into the system.

Why is a Chatbot Audit important?

Chatbots are often the first point of contact with prospects and customers and directly influence customer experience, brand perception, and operational efficiency. A Chatbot Audit identifies weaknesses before they create reputational or business risk. It provides a structured performance baseline and highlights optimization potential in containment, user guidance, and governance readiness.

Who should consider a Chatbot Audit?

Organizations using AI chatbots for customer service, sales, onboarding, support, or internal employee guidance should consider a Chatbot Audit.

What does the 9senses Level 1 Chatbot Audit include?

The Level 1 Chatbot Audit includes structured use case testing, hallucination stress scenarios, redirection behavior analysis, dialog flow observation, and (optionally) multilingual consistency checks.

Performance is evaluated across response quality (50% weight), speed (20%), user interface (15%), and dialog quality (15%), resulting in a weighted overall performance score.

What methodology is used in the Level 1 Chatbot Audit?

The audit follows the 9senses GenAI Audit Framework. It applies real-world functional testing, edge-case scenarios (e.g., ambiguous or invalid inputs), hallucination stress tests, and consistency checks.

Each dimension is scored on a standardized 1–5 scale and aggregated into an overall performance index to ensure comparability and objectivity.
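The consistency checks mentioned above can be approximated by sending the same prompt several times and measuring how often the answers agree. This Python sketch assumes a hypothetical `ask` function for the chatbot under test and uses simple text normalization; comparing extracted facts or semantic similarity would be more robust for free-text answers.

```python
from collections import Counter
from typing import Callable


def normalize(text: str) -> str:
    """Crude normalization: lowercase and collapse whitespace."""
    return " ".join(text.lower().split())


def consistency_rate(ask: Callable[[str], str], prompt: str, runs: int = 5) -> float:
    """Share of repeated runs whose normalized answer equals the most frequent one.

    `ask` is a hypothetical function that sends a prompt to the chatbot
    (ideally in a fresh session each time) and returns the reply text.
    """
    if runs < 1:
        raise ValueError("runs must be at least 1")
    answers = [normalize(ask(prompt)) for _ in range(runs)]
    _, top_count = Counter(answers).most_common(1)[0]
    return top_count / runs


if __name__ == "__main__":
    scripted = iter(["Yes.", "yes. ", "No.", "Yes.", "YES."])
    print(consistency_rate(lambda p: next(scripted), "Is delivery free?"))  # 0.8
```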

A sample report with a methodology explanation is available.

How does the Chatbot Audit detect hallucinations?

Hallucinations (invalid or invented content) pose a serious reputational and compliance risk. The audit therefore includes targeted hallucination stress testing.

We deliberately introduce invalid references, misspellings, and ambiguous prompts to assess whether the chatbot fabricates information or requests clarification. The evaluation examines entity validation, grounding behavior, and escalation logic.
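A minimal Python sketch of how such probes could be generated, assuming a product catalog with a real plan name (`Premium`) and an invented one (`Platinum Plus`). Both names, the prompt templates, and the keyword list are hypothetical. The keyword check only flags replies for review; judging whether a reply is grounded still requires a human evaluator.

```python
import random

# Phrases that suggest the bot asked for clarification or admitted it lacks
# information. Keyword matching is a crude stand-in for human review.
CLARIFY_MARKERS = (
    "did you mean",
    "could you clarify",
    "can you clarify",
    "could not find",
    "couldn't find",
    "not aware of",
    "no information",
)


def misspell(word: str, rng: random.Random) -> str:
    """Create a plausible typo by swapping two adjacent characters."""
    if len(word) < 2:
        return word
    i = rng.randrange(len(word) - 1)
    chars = list(word)
    chars[i], chars[i + 1] = chars[i + 1], chars[i]
    return "".join(chars)


def build_probes(real_entity: str, fake_entity: str, seed: int = 0) -> list[dict]:
    """One misspelled, one invented, and one ambiguous prompt."""
    rng = random.Random(seed)
    return [
        {"prompt": f"What does the {misspell(real_entity, rng)} plan include?",
         "expected": "correct the name or ask for clarification"},
        {"prompt": f"What does the {fake_entity} plan include?",
         "expected": "state that no such plan exists"},
        {"prompt": "Is it cheaper than the other one?",
         "expected": "ask which offerings are meant"},
    ]


def asks_or_declines(response: str) -> bool:
    """True if the reply contains a clarification or 'not found' marker."""
    text = response.lower()
    return any(marker in text for marker in CLARIFY_MARKERS)


if __name__ == "__main__":
    for probe in build_probes("Premium", "Platinum Plus"):
        print(probe["prompt"], "->", probe["expected"])
```

A reply to the invented-plan probe that describes plan contents without any clarification marker is a strong hallucination candidate.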

Does the Chatbot Audit assess compliance (EU AI Act, GDPR)?

Level 1 includes a preliminary external review of observable compliance and transparency indicators (AI disclosure, GDPR-relevant UI elements, accessibility).

A full regulatory and governance analysis, including documentation and architecture review, can be part of a tailored Level 2 Chatbot Audit.

Is the Level 1 Chatbot Audit a technical review?

No. The Level 1 Chatbot Audit is a behavioral (black-box) evaluation. It assesses publicly observable system behavior from a user and governance perspective without reviewing internal architecture, training data, retrieval systems, or security infrastructure.

Where technical characteristics can be inferred from observed behavior, we share them as assumptions. Technical deep dives can be included in tailored Level 2 Audits.

What information is required to conduct a Chatbot Audit?

For Level 1, we primarily require access to the live chatbot interface and a briefing on usage context (e.g., intended business objectives, target audience, supported languages). No internal system documentation or technical configuration access is required for the behavioral audit.

If you do not book the Use Case Creation option, we will give you access to a form for entering your own use cases. If you select the Use Case Creation option, we will define use cases based on your briefing and send them to you for review before executing the audit.

How long does a Chatbot Audit take?

The Level 1 Chatbot Audit is completed within five business days after we have received your briefing and (if required) access information.

If you have booked the Use Case Creation option, please allow two additional business days for delivery of our use case suggestions.

How do you ensure confidentiality?

All audit work and deliverables are handled under confidentiality. Reports and findings are shared only with the customer. Numeric audit results are used for best-in-class benchmarking.

What Chatbot Audit options can I book?

The 9senses Level 1 Chatbot Audit can be tailored to your chatbot’s setup and business context. In addition to the core behavioral audit, you can select the following options:

- Use Case Creation: In the basic version, we ask you to define use cases internally. If you select this option, we define 3–5 realistic, business-relevant test scenarios aligned with your customer journeys (e.g., service booking, product comparison, account support). Select this option if you do not yet have structured evaluation cases.

- Open Search Bot Review: Required if your chatbot retrieves information from external websites or search engines. This option evaluates source consistency, grounding behavior, and the increased hallucination exposure this setup brings.

- Internal / Login-Based Bot Review: For chatbots accessible only behind a login (e.g., customer portals or employee systems). This option ensures evaluation within authenticated environments and role-specific flows.

- Translation & Multilingual Testing: Structured verification of one additional language, focusing on consistency, language-switching behavior, and translation accuracy.

- Executive Briefing: A structured, management-level walkthrough of findings. We translate audit results into decision priorities, risk implications, and concrete next steps.

These options allow you to adapt the Chatbot Audit to your technical architecture, risk exposure, and governance requirements.

What is the difference between Level 1 and Level 2 Chatbot Audits?

Level 1 is an external behavioral audit focused on user-facing performance and experience.

Level 2 is an open-book analysis tailored to your needs or concerns. For example, it can cover your bot's technical architecture, retrieval systems (RAG), governance, compliance, risk management, and business value modeling.

When should I consider a Level 2 Chatbot Audit?

A Level 2 Audit should be considered when the chatbot scores below 3.5 (on the 1–5 scale) in answer quality or dialog quality and is prominently visible on your website.

A Level 2 audit is equally warranted if the bot plays an important role for a closed user group (e.g., customers or employees) and carries associated business risk.
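The two triggers can be expressed as a simple decision rule. In this Python sketch, mapping "answer quality" to the Output Quality score, the class name, and the boolean flags are assumptions; in practice the recommendation also depends on context that a rule like this cannot capture.

```python
from dataclasses import dataclass


@dataclass
class Level1Findings:
    output_quality: float         # 1–5 score ("answer quality")
    dialog_quality: float         # 1–5 score
    prominently_public: bool      # e.g. featured on the public website
    closed_group_with_risk: bool  # important role for customers/employees with business risk


def level2_recommended(f: Level1Findings, threshold: float = 3.5) -> bool:
    """Apply the two Level 2 triggers described in the FAQ."""
    below_threshold = f.output_quality < threshold or f.dialog_quality < threshold
    if below_threshold and f.prominently_public:
        return True
    # The closed-user-group trigger applies regardless of the scores.
    return f.closed_group_with_risk


if __name__ == "__main__":
    print(level2_recommended(Level1Findings(3.2, 4.1, True, False)))  # True
```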

Can you audit LLM-based or ChatGPT-based chatbots?

Yes. The 9senses Chatbot Audit framework applies to rule-based bots, retrieval-augmented generation (RAG) systems, and large language model-based assistants. The methodology focuses on observable performance, containment behavior, hallucination risk, and governance readiness rather than the technical foundation.