9senses on artificial intelligence

What AI really is...

When most of us at 9senses began working with what is now labeled Artificial Intelligence, we didn't use that term. Back then, we talked about non-linear computing, fuzzy logic, heuristics and machine learning, among other things.

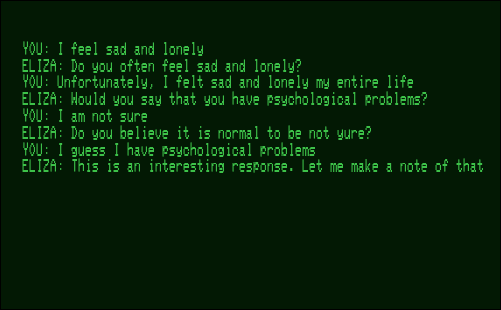

Today, many people think that AI makes computers as smart as humans. In reality, computer software is still far from reaching that level, but today it is able to emulate and even surpass human capabilities in specific fields, particularly those that require processing large amounts of information or generating output from a large pool of data. We would like to bring a bit of clarity here, at the cost of taking away some of the magic of AI, as did Joseph Weizenbaum, the legendary creator of Eliza:

“What I had not realized is that extremely short exposures to a relatively simple computer program could induce powerful delusional thinking in quite normal people.”

Joseph Weizenbaum (1923-2008), Inventor of Eliza

But what is AI really? We asked two conversational AI systems for their definition of Artificial Intelligence, and they came back with quite divergent answers.

With two quite divergent statements from two AI systems, we feel free to formulate our own answer. We at 9senses define AI as "a computer system that is able to react to an event it has never experienced before in a meaningful way that is adequate to that event, based on the analysis of many similar events from data." This ability clearly distinguishes it from traditional computer logic, where each event (or combination of events) has exactly one defined reaction. We explicitly avoid comparing it with humans, because in some areas computers are still eons away from reaching our abilities, while in others they massively outperform us.
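To make this distinction concrete, here is a minimal Python sketch. The sensor events, reactions and function names are hypothetical, and k-nearest neighbors is just one simple way of "analyzing many similar events", not the method behind any particular product. The rule table has no answer for an event it has never seen, while the nearest-neighbor function reacts based on the most similar past events.

```python
import math
from collections import Counter

# Hypothetical past events: (temperature in °C, vibration in mm/s) -> reaction taken
past_events = [
    ((60.0, 1.0), "continue"),
    ((62.0, 1.2), "continue"),
    ((65.0, 1.5), "continue"),
    ((85.0, 4.0), "stop"),
    ((88.0, 4.5), "stop"),
    ((90.0, 5.0), "stop"),
]

# Traditional logic: each known event has exactly one defined reaction.
rule_table = dict(past_events)

def rule_based_reaction(event):
    # Returns None for any event that is not literally in the table.
    return rule_table.get(event)

def learned_reaction(event, k=3):
    # Rank past events by their distance to the new event ...
    ranked = sorted(past_events, key=lambda item: math.dist(item[0], event))
    # ... and take the majority reaction among the k most similar ones.
    votes = Counter(reaction for _, reaction in ranked[:k])
    return votes.most_common(1)[0][0]

new_event = (87.0, 4.2)  # never seen before
print(rule_based_reaction(new_event))  # None
print(learned_reaction(new_event))     # stop
```

For brevity the two features are not rescaled; a real system would normally normalize them so that one unit (here, degrees) does not dominate the distance.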

ChatGPT answering “What is AI?”

Artificial Intelligence (AI) is the field of computer science focused on creating systems that can perform tasks that normally require human intelligence. AI enables machines to learn from experience, adapt to new inputs, and perform tasks like problem-solving, language understanding, perception, and decision-making.

AI can be broken down into several types and subfields:

- Narrow AI (or Weak AI) – AI systems designed for specific tasks, like voice recognition (e.g., Siri, Alexa), image recognition, or playing a game of chess. They excel at a narrow set of tasks but lack general awareness.

- General AI (or Strong AI) – Hypothetical AI that could perform any intellectual task a human can do, with the ability to reason, plan, and understand complex concepts. This level of AI does not yet exist.

- Machine Learning – A subset of AI that uses algorithms and statistical models to allow computers to learn from and make decisions based on data. For instance, machine learning is used in recommendation systems and predictive analytics.

- Deep Learning – A further subset of machine learning that uses artificial neural networks, inspired by the human brain, to analyze data and make decisions. Deep learning is commonly used in advanced image recognition, language processing, and autonomous driving.

- Natural Language Processing (NLP) – The branch of AI that focuses on enabling machines to understand, interpret, and respond to human language in a meaningful way.

AI is increasingly used in fields like healthcare, finance, automotive, education, and customer service, transforming how we interact with technology in everyday life.

Gemini (Google) answering “What is AI?”

Artificial intelligence (AI) is a branch of computer science that aims to create intelligent agents, which are systems that can reason, learn, and act autonomously. AI encompasses a wide range of techniques and technologies, including machine learning, natural language processing, and computer vision.

Key Concepts in AI:

- Machine Learning: This involves training algorithms on large datasets to make predictions or decisions without being explicitly programmed.

- Natural Language Processing (NLP): This enables computers to understand and process human language, allowing for tasks like language translation and sentiment analysis.

- Computer Vision: This focuses on teaching computers to interpret and understand visual information from the world, such as images and videos.

Applications of AI:

AI is being used in a variety of fields, including:

- Healthcare: AI is used to develop new drugs, analyze medical images, and provide personalized treatment plans.

- Finance: AI is used for fraud detection, algorithmic trading, and customer service chatbots.

- Autonomous Vehicles: AI is used to develop self-driving cars and trucks.

- Entertainment: AI is used to create realistic computer graphics and generate personalized recommendations.

Key Fields of AI

There are various key AI technology areas; here is one of many ways to break them down:

Machine Learning

Finding patterns in large datasets and drawing conclusions is at the core of most AI applications these days. Machine Learning provides the statistical methods to make it happen.

Natural Language Processing

The ability to communicate with humans in natural language is one of the most recent key AI developments. It makes interacting with computers much easier, for example in customer-facing IT.

Computer Vision

Finding items and differences in still or moving imagery is something that computers excel at, for example in surveillance, irregularity detection or simply counting.

Robotics

Creating autonomous systems that perform physical actions, such as driving a vehicle by controlling its equipment based on sensor input and logic, is a key field of AI, albeit a difficult one.

Understanding patterns

Machine Learning (ML)

At the core of many AI technologies (e.g. NLP, Computer Vision, Robotics) is what we call Machine Learning, the ability to use statistical data analysis to generate insights and apply these to new data. Or, as Wikipedia puts it: "Machine Learning is a field of study in artificial intelligence concerned with the development of statistical algorithms that can learn from data and generalize to unseen data; and thus perform tasks without explicit instructions."

Background (history)

Machine Learning goes back to the 1950s, when researchers developed algorithms that could recognize simple patterns in data. In the early 21st century, big data, powerful computing hardware (particularly GPUs), and improved algorithms made many breakthroughs possible. Since then, ML has rapidly expanded into almost every field of application.

How it works

Machine Learning systems are trained on datasets. During training, the algorithm makes predictions, compares them with known outcomes in the data (for example, recorded results), and adjusts its internal parameters to reduce the difference. This process, repeated many times, gradually improves accuracy. The final model can then make predictions on new, unseen data of the same kind.
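The predict, compare and adjust cycle can be sketched in a few lines of Python. This is a minimal example assuming the simplest possible model (a straight line y = w * x + b) trained with gradient descent on made-up data; real ML systems use far larger models and datasets, but follow the same basic loop.

```python
# Hypothetical training data: input x and the known outcome y (here y = 2x + 1)
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

def train(xs, ys, epochs=2000, learning_rate=0.02):
    w, b = 0.0, 0.0  # internal parameters, starting from an uninformed guess
    n = len(xs)
    for _ in range(epochs):
        # 1. Make predictions with the current parameters.
        predictions = [w * x + b for x in xs]
        # 2. Compare them with the known outcomes.
        errors = [p - y for p, y in zip(predictions, ys)]
        # 3. Adjust the parameters to reduce the mean squared error (gradient descent).
        grad_w = 2 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2 / n * sum(errors)
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

w, b = train(xs, ys)
print(round(w, 2), round(b, 2))  # close to 2.0 and 1.0
print(round(w * 10 + b, 1))      # prediction for the unseen input x = 10, close to 21.0
```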

For example, to build a system that predicts machine failures, historical operational data can be matched with recorded failures. If the ML algorithm detects a pattern that regularly precedes a certain failure (for example, higher temperatures, unusual noises or changes in efficiency), it can trigger maintenance before the machine actually breaks down.
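A deliberately simple sketch of this idea, assuming hypothetical weekly temperature readings labeled with whether a failure followed. It learns a single temperature threshold (a so-called decision stump) that best separates the two cases. A real predictive-maintenance system would combine many signals such as temperature, noise and efficiency, and would check its accuracy on data it was not trained on rather than on the training history as done here.

```python
# Hypothetical history: highest bearing temperature (°C) measured in a week,
# and whether the machine failed during the following week.
history = [
    (61, False), (64, False), (66, False), (70, False), (72, False),
    (75, False), (78, True), (81, True), (84, True), (88, True),
]

def learn_threshold(history):
    """Pick the temperature threshold that classifies the most past weeks correctly."""
    best_threshold, best_correct = None, -1
    for candidate, _ in history:  # every observed temperature is a candidate threshold
        correct = sum((temp >= candidate) == failed for temp, failed in history)
        if correct > best_correct:
            best_threshold, best_correct = candidate, correct
    return best_threshold, best_correct / len(history)

threshold, accuracy = learn_threshold(history)

def needs_maintenance(weekly_peak_temperature):
    # Apply the learned pattern to new, unseen readings.
    return weekly_peak_temperature >= threshold

print(threshold, accuracy)    # 78 1.0
print(needs_maintenance(80))  # True
```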

Key concepts

Machine Learning is primarily about pattern recognition in datasets, which are usually large. Three main types of ML exist: supervised learning (learning from labeled examples), unsupervised learning (finding structure in unlabeled data), and reinforcement learning (learning by trial and error through feedback).
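To illustrate unsupervised learning, here is a minimal k-means clustering sketch in Python, one common technique for finding structure in unlabeled data. The order values are hypothetical; the algorithm is never told which group a value belongs to, yet it separates the data into two clusters on its own.

```python
import random

def k_means(points, k, iterations=20, seed=0):
    rng = random.Random(seed)
    centers = rng.sample(points, k)  # start with k randomly chosen points as centers
    for _ in range(iterations):
        # Assignment step: put every point into the cluster of its nearest center.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: abs(p - centers[i]))
            clusters[nearest].append(p)
        # Update step: move each center to the mean of its cluster (keep it if empty).
        centers = [sum(c) / len(c) if c else centers[i] for i, c in enumerate(clusters)]
    return sorted(centers)

# Hypothetical unlabeled data: order values from two (unknown) customer groups
orders = [12, 15, 11, 14, 13, 95, 102, 98, 101, 97]
print(k_means(orders, k=2))  # [13.0, 98.6]
```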

Modern ML often relies on artificial neural networks: mathematical systems, loosely inspired by the human brain, that improve their performance by learning from experience.
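The smallest building block of such a network is a single artificial neuron. The sketch below is the classic perceptron: it forms a weighted sum of its inputs, applies a threshold, and nudges its weights whenever it gets a labeled example wrong. Learning the logical AND function is a toy task chosen purely for illustration; modern networks stack many such neurons in layers and train them with backpropagation rather than this simple rule.

```python
def step(z):
    # Threshold "activation": the neuron fires (1) only if the weighted sum is positive.
    return 1 if z > 0 else 0

def train_neuron(samples, epochs=20, learning_rate=1):
    weights, bias = [0, 0], 0
    for _ in range(epochs):
        for inputs, target in samples:
            output = step(sum(w * x for w, x in zip(weights, inputs)) + bias)
            error = target - output
            # Perceptron rule: shift the weights in the direction that reduces the error.
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias

# Four labeled examples of the logical AND function
and_samples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_neuron(and_samples)

def predict(inputs):
    return step(sum(w * x for w, x in zip(weights, inputs)) + bias)

print([predict(x) for x, _ in and_samples])  # [0, 0, 0, 1]
```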

What ML Can Do

Today, Machine Learning powers search engines, recommendation systems, voice assistants, fraud detection, image analysis, and autonomous robotic systems. It can identify tumors in scans, predict equipment failures in factories, translate languages, and generate realistic text, images, and even computer code. In many narrow tasks, ML systems now outperform humans in speed and accuracy.

ML is particularly strong at tasks involving large amounts of structured or unstructured data where it can uncover subtle patterns too complex for manual analysis. In scientific research, ML accelerates drug discovery and climate modeling. In business, it supports forecasting, customer segmentation, and process optimization.

The Challenges

Machine Learning has important limitations. It heavily depends on the quality and quantity of the underlying data to find the relevant statistical patterns. Bad data leads to incorrect outcomes.

As machine learning, like all "AI", does not truly understand context on an abstract level, it needs clear guidance during training and review, often combined with additional boundaries set by humans.

This becomes particularly difficult when the model itself is opaque, a "black box" in which it remains unclear which patterns drive its decisions. Careful governance is therefore essential, particularly in high-impact areas.

Interacting with AI

Natural Language Processing (NLP)

There is nothing more fascinating than being able to converse with computers in normal language: asking questions and receiving meaningful answers. Anticipated for more than half a century, this only became realistically possible a few years ago with the arrival of the first large language models. When you try out ChatGPT, Gemini or others, the results are indeed fascinating, and more and more companies are beginning to use these LLMs for internal employee support and customer interactions.

Background (history)

NLP dates back to the 1950s, with first attempts at rule-based machine translation. These early systems relied on manually written grammar rules and dictionaries, but progress was slow and limited by computing power and linguistic complexity.

In the 1990s and 2000s, NLP shifted toward statistical methods, using data to model language patterns. The real breakthrough came in the 2010s with deep learning and large language models, which dramatically improved tasks such as translation, speech recognition, and text generation.

How it works

An NLP system converts text into a numerical representation. This process breaks text into smaller units (such as words or subwords) and maps them into vectors in a multidimensional space. These vectors capture patterns of meaning based on how words appear together.
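A minimal sketch of this idea in Python, under heavy simplifying assumptions: a tiny made-up corpus, a naive word-level tokenizer and vectors built by simply counting neighboring words. Modern models learn dense vectors for subword tokens instead, but the underlying intuition is the same: words used in similar contexts end up with similar vectors, measured here with cosine similarity.

```python
import math
import re
from collections import defaultdict

# Hypothetical mini corpus
corpus = [
    "cats drink milk",
    "dogs drink water",
    "cats chase mice",
    "dogs chase cats",
    "engineers repair machines",
    "engineers maintain machines",
]

def tokenize(text):
    # Naive word-level tokenizer; real systems usually split text into subwords.
    return re.findall(r"[a-z]+", text.lower())

# Each word's vector counts its immediate left and right neighbors.
vectors = defaultdict(lambda: defaultdict(int))
for sentence in corpus:
    tokens = tokenize(sentence)
    for i, word in enumerate(tokens):
        for j in (i - 1, i + 1):
            if 0 <= j < len(tokens):
                vectors[word][tokens[j]] += 1

def cosine(a, b):
    """Cosine similarity between two sparse count vectors (1.0 = same direction)."""
    dot = sum(count * b.get(key, 0) for key, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

print(round(cosine(vectors["cats"], vectors["dogs"]), 2))      # 0.95
print(round(cosine(vectors["cats"], vectors["machines"]), 2))  # 0.0
```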

During training, the model predicts missing words, next words, or sentence structures and adjusts its internal parameters to reduce errors. Over time, it learns statistical relationships between words and phrases. When deployed, it uses these learned relationships to perform tasks such as understanding user input, answering questions, summarizing documents, or translating languages. Like all machine learning systems, it predicts probabilities, but does not truly understand language content and context as humans do.
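Next-word prediction can be illustrated with the simplest possible language model, a bigram model that only counts which word follows which in a (here hypothetical) training text and turns those counts into probabilities. Large language models condition on long stretches of preceding text using neural networks rather than raw counts, but they likewise output probabilities for the next token.

```python
from collections import Counter, defaultdict

# Hypothetical training text, already split into tokens
tokens = "the machine is running . the machine is stopped . the pump is running .".split()

# "Training": count which token follows which.
following = defaultdict(Counter)
for current, nxt in zip(tokens, tokens[1:]):
    following[current][nxt] += 1

def next_word_probabilities(word):
    """Probability of each possible next word, estimated from the counts."""
    counts = following.get(word, Counter())
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}

def most_likely_next(word):
    probs = next_word_probabilities(word)
    return max(probs, key=probs.get) if probs else None

print(next_word_probabilities("is"))  # 'running' about 0.67, 'stopped' about 0.33
print(most_likely_next("the"))        # machine
```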

Key concepts

NLP combines linguistics with machine learning. NLP systems analyze grammar (syntax), meaning (semantics), and sometimes intent (pragmatics).

Modern NLP relies heavily on machine learning models called transformers, which process language by analyzing relationships between words in a sentence, instead of relying on rigid grammar rules. These systems learn patterns from vast amounts of text.
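The core operation of a transformer is scaled dot-product attention: each word's vector is compared with every other word's vector, and the resulting scores decide how much each word contributes to the word's new representation. The sketch below shows just this step in plain Python with hypothetical toy vectors. In a real transformer, queries, keys and values are produced by learned weight matrices, and many attention heads and layers are stacked.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    largest = max(scores)  # subtracting the maximum keeps exp() numerically stable
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention over a short sequence of word vectors."""
    d = len(keys[0])
    outputs = []
    for q in queries:
        # Score how strongly this word relates to every word in the sequence.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # The new representation is a weighted mix of all value vectors.
        outputs.append([sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))])
    return outputs

# Hypothetical 2-dimensional vectors for the words "pump", "is", "running".
# A real transformer would first multiply them by learned query/key/value matrices.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in self_attention(x, x, x):
    print([round(v, 2) for v in row])
```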

Small and large language models are first trained on general language and reasoning. For individual applications, they are then fine-tuned and often supplemented with specific data that is retrieved when responding to users.

What NLP Can Do

Current NLP systems can translate languages, summarize long texts, extract key information from documents, analyze sentiment in social media, and power conversational agents. They support customer service automation, legal document review, medical record analysis, and search engine optimization. In many cases, they dramatically reduce manual effort in text-heavy tasks.

Advanced large language models can generate essays, code, marketing content, and even simulate dialogue. Speech recognition and speech synthesis systems allow voice interaction with machines. In research, NLP helps analyze scientific publications at scale. While performance varies by task, NLP has reached a level where human-like language interaction is increasingly practical in real-world applications.

The Challenges

No matter how "human" they sound, NLP systems face important limitations built into the way they work. They can easily produce fluent but factually incorrect or misleading information. They also reproduce any falsehood or bias contained in their training data.

As NLP systems are based purely on statistical patterns and have only limited contextual understanding, they can struggle with reasoning and consistency. They are also entirely dependent on the input provided during training and the feedback received during operation. This is particularly problematic with large open models.

Training large models requires significant resources and raises concerns about privacy, misinformation, and misuse. Human supervision and critical evaluation are thus essential.

Recognizing things

Computer Vision

Much like the human eye working together with our brain and our muscles, today's AI tools can do a formidable job of processing visual information, particularly when it comes to recognizing certain patterns and spotting small differences that humans have a hard time even noticing. The biggest advantages are that computers do not get tired and can work in environments where humans would be unsafe or uncomfortable.

Background (history)

Early work on Computer Vision began in the 1960s and 1970s, with programs to recognize simple shapes. These early systems relied on manually designed rules and were quickly overwhelmed by complexity. One of the first practical applications was optical character recognition (OCR) of typed text to scan documents.

Progress accelerated in the 2000s with improved hardware, large datasets and machine learning. A major breakthrough came in 2012, when deep learning significantly improved recognition accuracy. Since then, Computer Vision has become a key application.

Key concepts

At its core, Computer Vision focuses on extracting meaningful information from visual data based on pixels represented by numerical values. Vision systems must detect patterns within these numbers to identify objects, shapes, textures, and - when comparing multiple images - movements.
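To show what "pixels represented by numerical values" means in practice, here is a minimal sketch with two hypothetical 4x4 grayscale frames. A hand-written rule flags every pixel whose brightness changed by more than a threshold, which is enough to spot that something moved. This is the kind of hand-designed rule early systems relied on, and the threshold value is an arbitrary assumption.

```python
# Two hypothetical 4x4 grayscale frames (0 = black, 255 = white)
frame_1 = [
    [10, 10, 10, 10],
    [10, 200, 10, 10],
    [10, 10, 10, 10],
    [10, 10, 10, 10],
]
frame_2 = [
    [10, 10, 10, 10],
    [10, 10, 10, 10],
    [10, 10, 200, 10],
    [10, 10, 10, 10],
]

def changed_pixels(a, b, threshold=30):
    """Return (row, column) positions whose brightness changed by more than threshold."""
    return [
        (r, c)
        for r, (row_a, row_b) in enumerate(zip(a, b))
        for c, (pa, pb) in enumerate(zip(row_a, row_b))
        if abs(pa - pb) > threshold
    ]

print(changed_pixels(frame_1, frame_2))  # [(1, 1), (2, 2)]
```

The bright spot disappeared at (1, 1) and appeared at (2, 2), i.e. it moved one pixel down and to the right.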

Modern Computer Vision relies heavily on deep neural networks. Instead of relying on hand-written rules, these systems learn visual patterns directly from large collections of labeled images.
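Many of these deep networks are convolutional neural networks (CNNs), whose basic operation slides small grids of weights (kernels) across the image. The sketch below applies one such kernel to a hypothetical image that is dark on the left and bright on the right. Here the kernel values are set by hand to respond to vertical edges, whereas a CNN would learn thousands of such values from labeled images. Like most deep learning libraries, the function actually computes a cross-correlation, which is conventionally called convolution.

```python
def convolve(image, kernel):
    """Slide the kernel over the image ('valid' positions only, no padding)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

# Hypothetical 3x5 image: dark on the left, bright on the right
image = [
    [0, 0, 0, 255, 255],
    [0, 0, 0, 255, 255],
    [0, 0, 0, 255, 255],
]
# Hand-set vertical-edge kernel: responds where brightness rises from left to right
kernel = [
    [-1, 1],
    [-1, 1],
]

for row in convolve(image, kernel):
    print(row)  # [0, 0, 510, 0] on each row: the edge is found between columns 2 and 3
```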

How it works

A Computer Vision system converts images into numerical data, typically at the pixel level. During training, the model is shown many labeled examples of what it will have to deal with. It makes predictions, compares them with the correct labels, and adjusts internal parameters to reduce errors. Over many iterations, it learns which visual features correspond to certain objects or categories.

Once trained, the model can classify images, detect objects within images, or segment images into meaningful regions. For example, in object detection, the system identifies both what an object is and where it is located in the image. Like other machine learning systems, it does not “see” in a human sense but calculates probabilities based on learned visual patterns.
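A detection result typically combines a label, a confidence score and a bounding box. A common way to check how well a predicted box matches a human-labeled one is intersection over union (IoU): the overlapping area divided by the combined area. The sketch below uses hypothetical coordinates; the 0.5 threshold is a widely used convention (for example in the PASCAL VOC benchmark), not a fixed rule.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ix_min, iy_min = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix_max, iy_max = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    intersection = max(0, ix_max - ix_min) * max(0, iy_max - iy_min)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union else 0.0

# Hypothetical detector output and a human-labeled box for the same object
detection = {"label": "pallet", "confidence": 0.91, "box": (50, 40, 150, 120)}
labeled_box = (60, 50, 160, 130)

overlap = iou(detection["box"], labeled_box)
print(round(overlap, 2))                      # 0.65
print("match" if overlap >= 0.5 else "miss")  # match
```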

Increasingly, AI systems are used for image generation, the production of realistic but artificial and often misleading depictions of reality.

The Challenges

Despite their capabilities often surpassing human vision, Computer Vision systems have limitations. They struggle with unknown information, unfamiliar conditions, or overlapping objects. Equally, while they can even detect the smallest changes, learning what is relevant and what isn’t can be hard.

Another concern is bias, for example in facial recognition, where the accuracy of systems trained on biased data often varies across ethnic groups. And ultimately, they do not “recognize” items, but only statistical patterns, which can lead to dangerous errors. As with all tools, careful oversight and governance are required.

Additionally, the challenges emerging from image generation create entirely new ethical and regulatory problems.

What Computer Vision Can Do

Creating actions

Robotics

Creating autonomous systems that can perform physical actions, such as driving a vehicle by controlling its equipment based on sensor input and logic, is a key field of AI, albeit one that is still very difficult to master.

Background (history)

The field of Robotics is focused on designing, building, and operating machines that can perform physical tasks. After much experimentation beginning in the 18th century, the first industrial robots were introduced in the 1960s, primarily for repetitive tasks in manufacturing, such as welding and assembly.

For decades, robots were mostly confined to industrial environments. Advances in computing power, sensors and machine learning have expanded their range and brought robotics into everyday life.

How it works

A robot operates in a continuous cycle of sensing, planning and acting. Sensors collect data about the environment - such as distance, position, temperature, or visual content. The control system processes this information and determines the appropriate action. The robot then executes the movement or task, often using motors or actuators.
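The sense, plan and act cycle can be simulated in a few lines. This sketch assumes a hypothetical robot moving along a single axis toward a target, a perfect position sensor, and a simple proportional controller (speed proportional to the remaining distance, capped at a maximum). Real robots have to deal with noisy sensors, inertia and many more degrees of freedom.

```python
def sense(true_position):
    # Hypothetical perfect position sensor; real sensors are noisy.
    return true_position

def plan(measured_position, target, gain=0.5, max_speed=2.0):
    # Proportional control: move faster the further away the target is, up to a limit.
    speed = gain * (target - measured_position)
    return max(-max_speed, min(max_speed, speed))

def act(position, speed, dt=1.0):
    # Simulated actuator: the motor moves the robot by speed * time step.
    return position + speed * dt

position, target = 0.0, 10.0
for _ in range(20):
    measured = sense(position)
    speed = plan(measured, target)
    position = act(position, speed)
print(round(position, 3))  # 10.0
```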

In simple industrial robots, movements are pre-programmed and repeated precisely. In more advanced systems, machine learning and real-time feedback allow robots to adapt to changing conditions. For example, a warehouse robot may use sensors to avoid obstacles while navigating toward a target location.
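Avoiding obstacles on the way to a target is often approached as path planning on a map. The sketch below assumes a hypothetical warehouse floor represented as a grid (shelves marked "#") and uses breadth-first search, one of the simplest planning algorithms, to find a shortest route. Production robots typically use more elaborate planners (such as A*) combined with live sensor data.

```python
from collections import deque

# Hypothetical warehouse map: "." is free floor, "#" is a shelf or obstacle
warehouse = [
    ".....",
    ".###.",
    ".#...",
    ".#.#.",
    "...#.",
]

def shortest_path(grid, start, goal):
    """Breadth-first search over free cells; returns the list of cells, or None."""
    rows, cols = len(grid), len(grid[0])
    came_from = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk back from the goal to the start to reconstruct the route.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == "." and (nr, nc) not in came_from:
                came_from[(nr, nc)] = cell
                queue.append((nr, nc))
    return None  # the goal cannot be reached

print(shortest_path(warehouse, (4, 0), (2, 2)))
# [(4, 0), (4, 1), (4, 2), (3, 2), (2, 2)]
```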

Key concepts

At its core, Robotics combines mechanics (how a robot moves), electronics (how it is powered and controlled), and software (how it makes decisions). A robot typically consists of sensors to perceive its environment, actuators (such as motors or valves) to act, and a control system to coordinate actions.

Modern Robotics often integrates Machine Learning to enable perception, planning, and decision-making. For example, a robot may use computer vision to recognize objects, machine learning to improve performance over time, and motion planning algorithms to navigate safely. The goal is to create systems that can interact effectively with the changing physical world, either autonomously or under human supervision.

What Robotics Can Do

Robots are often used to perform repetitive or dangerous tasks and, increasingly, specific tasks that require high precision.

Robots also vacuum homes, drive around, and explore dangerous or far-away spaces.

The Challenges

Robotics, particularly when it comes to robots navigating open spaces like roads or terrain, is still a very difficult field, requiring significant sensing and computing power. Making an autonomous car drive under all conditions like a human driver is still a significant and expensive challenge. And humanoid robots that can walk and dexterously use their "hands" are still far from matching even a small child's ability to process sensory input and turn it into smooth and seamless motion.

Robots also require substantial engineering effort, maintenance, and safety considerations. Battery life, mechanical wear, and system failures can limit performance and create risk of physical damage to people and things.

Understanding these different AI approaches is essential when designing real-world AI systems. Each method has distinct strengths and limitations, which must be carefully considered when selecting the right approach for a given problem.

Unless you're curious about the history of AI and our more philosophical views, you can now easily skip the rest of this page and move to what we can offer you.

History of AI

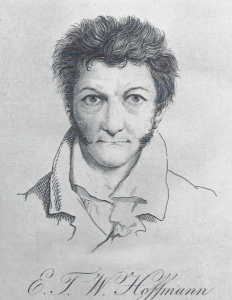

1816: Fiction

The idea of "automatons" acting "intelligently" is much older than computers themselves. For example, E. T. A. Hoffmann's "The Sandman", published in 1816, introduces a beautiful girl named Olimpia. She dances and sings beautifully but speaks only a few words. In fact, she is an automaton, created by physics professor Spalanzani.

1940s: The Turing Test

With the appearance of the first computers in the 1940s, fascination with the technology quickly led to the idea that they could soon perform as intelligently as humans. In 1949, British mathematician and computer scientist Alan Turing devised a test to evaluate whether a computer could emulate a human conversation convincingly. It took 65 years until a program was reported to narrowly pass it.

1966: Eliza

When German-American computer scientist Joseph Weizenbaum created the chat program "Eliza" in 1966, simulating a dialogue with a psychotherapist, it was meant as a playful first attempt at processing natural language. Even though the logic behind it was very simple, many people considered it intelligent and expected computers to be able to speak like humans soon.

1980s: Machine Learning

With increasingly powerful computers and larger storage capacities able to handle large datasets, the first successful machine learning approaches were introduced. They were based on the ability to autonomously find patterns in data, relate them to certain events and conditions, and suggest or take action.

1990s: Playing Chess (and Winning)

In 1997, IBM's Deep Blue supercomputer won its first match against reigning world chess champion Garry Kasparov. While it was mostly driven by sheer computing power, evaluating more than 200 million positions per second, it also relied on classic AI techniques such as heuristic evaluation and minimax search, which can be considered AI.

2000s: Seeing and Knowing

While optical character recognition (OCR) had been developed long ago, computers became capable of "seeing" in the late 20th and the early 21st century. This is when the first face and object recognition systems were developed. By now, AI is able to routinely identify people and objects and also understand what they are doing.

2010s: Solving Complex Problems

The previous decade was the era in which all earlier efforts to make computers act "intelligently" came together, and in which many breakthroughs shifted public attention back to the term "Artificial Intelligence", which had rarely been used since the 1970s. By 2010, normal desktop and laptop computers were powerful enough to perform AI tasks.

2020s: Listening and Speaking

Finally, conversational AI based on the Large Language Models released in recent years is able to hold conversations with humans. These models are routinely able to pass the Turing Test, which means that their natural-language responses are often hard to distinguish from those of a human.
